A little bit about me!

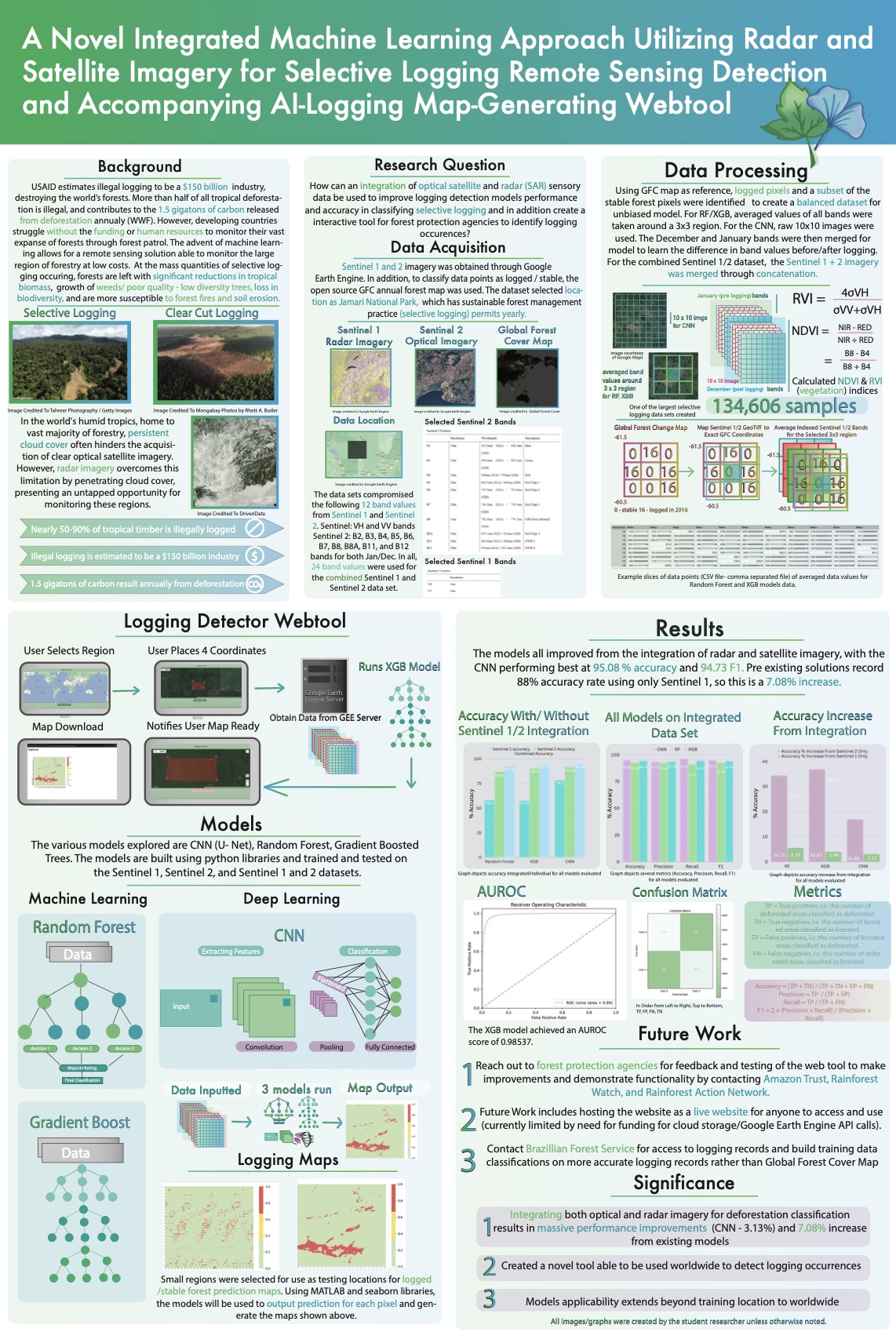

I developed a way to more accurately identify illegal logging because developing nations struggle to stop illegal deforestation. To detect it in real time, some stakeholders use satellite optical imagery, but savvy criminals have switched from clear cutting to removing only the larger, more valuable trees, which makes the logging more difficult to detect. Additionally, optical imagery requires cloud-free conditions, which is often not the case in the humid tropics. I found that I could help compensate for cloudy weather by combining satellite optical and radar datasets, since radar imagery is not affected by clouds. I tested various machine learning approaches and determined which was most accurate for this application.

PoweringSTEM is a 501c3 profit I founded to bridge the STEM education gap, providing underrepresented communities with resources, mentorship, and exposure to science and technology, empowering future innovators by democratizing STEM accessibility. We've organized medical, coding, and engineering camps reaching 2k+ participants & created a tutoring network assisting students w/ standardized testing + school subjects.

I oversaw all organizers and founded initiative of two hackathons held in Dec '22 & Oct '23. Secured $70k worth in prizes from Google, Wolfram etc. Arranged tech talks, beginner workshops, and industry speakers. Built the website poweringstem.com for hackathon and marketed hackathon extensively. We hosted 670+ participants from 38 countries